Analyzing security event logs

Archived

3 Tasks

20 mins

Scenario

Front Stage is concerned that someone’s user name and password may have been compromised, perhaps through a phishing attack. FSG has decided to enforce the restriction that everyone who accesses the Booking application must enable geolocation. If geolocation is not enabled, access to the Booking application is not granted.

FSG wants to write out each user’s pxRequestor pxLatitude and pxLongitude when logging custom security events. FSG also wants to record the timestamp for each event in a way that simplifies the computation of velocity, whether miles-per-hour (mph) or kilometers-per-hour (kph). If the speed at which the same user would have to travel to have logged two security events exceeds the realm of possibility, FSG wants to know who that user is and where the two events occurred.

It is unnecessary to implement a denial of access to the Booking application if geolocation is not enabled. Instead, devise a method to efficiently compute the velocity between two events recorded in a PegaRULES-SecurityEvent.log file for the same user. If the velocity exceeds a reasonable value, output the two events.

This challenge requires the use of the Linux Lite VM to complete.

Detailed Tasks

1 Review solution detail

The solution to this exercise requires the installation of Apache Spark and Rumble.

- Read the instructions to install Rumble: Installing Spark.

- Download Apache Spark.

- Download Rumble (such as spark-rumble-1.0.jar).

2 Install and configure Apache Spark and Rumble

- Download Apache Spark using a browser that runs inside the VM. Per the instructions, extract the archive and move it to /usr/local/bin by executing the following commands.

tar zxvf spark-2.4.4-bin-hadoop2.7.tgz

sudo mv spark-2.4.4-bin-hadoop2.7 /usr/local/bin

- Add Apache Spark to your PATH environment variable by executing the following commands.

export SPARK_HOME=/usr/local/bin/spark-2.4.4-bin-hadoop2.7

export PATH=$SPARK_HOME/bin:$PATH

- Execute the following commands to verify the steps.

cd $SPARK_HOME/bin

spark-submit --version

cd $HOME

spark-submit --version

- Use a browser running inside the VM to download Rumble.

- Create a directory from where Rumble is executed (for example $HOME/pega8/rumble).

- Move the downloaded spark-rumble-1.0.jar into the directory you created.

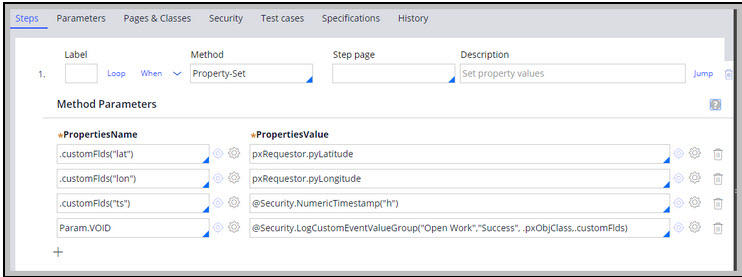

Define Rule-Utility-Functions in Rule-Utility-Library named Security

Security • LogCustomEventValueGroup() Void

- Parameters: eventType String, outcome String, message String, customFlds ClipboardProperty

- Description: customFlds must be a Text ValueGroup. Call this function to log a custom Security Event.

Security • NumericTimestamp() String

- Parameters: unit String, allowed values = "h", "m", "s"

- Description: Returns the current timestamp in units of hours, minutes, or seconds as a String
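To see why a numeric timestamp simplifies the velocity computation, here is a minimal shell sketch of the same idea. It is not the Pega rule's implementation: the function name numeric_timestamp and its optional second argument (epoch seconds, useful for repeatable output) are assumptions made for illustration. With the unit "h", the difference between two timestamps is already in hours, so miles divided by that difference gives mph directly.

```shell
#!/usr/bin/env bash
# Illustrative analog of Security • NumericTimestamp(); the name and the
# optional second argument are assumptions made for this sketch.
numeric_timestamp() {
  local unit="$1"
  local epoch_seconds="${2:-$(date +%s)}"   # default: now, in seconds since 1-1-1970 GMT
  local divisor
  case "$unit" in
    h) divisor=3600 ;;
    m) divisor=60 ;;
    s) divisor=1 ;;
    *) echo "unit must be h, m, or s" >&2; return 1 ;;
  esac
  # Divide with awk so the result keeps its fractional part.
  awk -v t="$epoch_seconds" -v d="$divisor" 'BEGIN { printf "%.6f\n", t / d }'
}

numeric_timestamp h        # current time in hours since the epoch
numeric_timestamp h 7200   # a fixed instant: two hours after the epoch
```

The fractional digits matter: two events logged a few seconds apart differ only in the third or fourth decimal place when the unit is hours.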

Override FSG-Booking-Work OpenDefaults Extension Point

Enable Geo-Location and Custom Security Events logging

The Chrome browser does not support Geolocation requests unless the server is secured. Chrome allows Geolocation requests within a Linux VM if its IP address is mapped to the name localhost.

Example:


- Enter the ifconfig IP address as the first entry for localhost.

- Test by executing ping localhost.

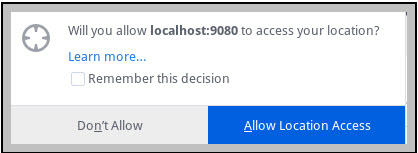

The When rule, pyGeolocationTrackingIsEnabled, must return true.

The When rule applied to Data-Portal displays the following pop-up window after login.

- Click Allow Location Access.

- Check for the pxLatitude and pxLongitude set within the pxRequestor page on your Clipboard.


Tip: For best results, use Firefox as your browser.

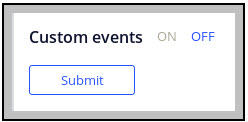

- Click Security > Tools > Security > Security Event Configuration to launch the Security Event Configuration landing page.

- At the bottom of the landing page, click ON to enable custom events.

- Click .

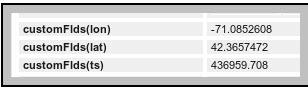

- Open an Event case for review, and check pyWorkPage on the Clipboard or Trace OpenDefaults to see the values for the customFlds Text Value Group, as shown in the following image.

- Click System > Operations > Logs.

- To the right of SECURITYEVENT, next to the Text link, click Log Files.

- If challenged, enter the user name admin and the password admin.

- Open the SecurityEvent.log file to see whether the customFlds values have been recorded.

Remove Quotes Around Numeric Values (necessary for JSON format)

Use the following steps to remove quotes around numeric values (click here for source).

- Copy and paste the text that you want the regular expression to examine.

- Replace each numeric value with (-?[0-9]*\.[0-9]*) if the value can be negative, or ([0-9]*\.[0-9]*) if the value cannot be negative.

- Use \1, \2, and so on, to specify which parsed value goes where.

| BEFORE text.txt |

|---|

|

{"id":"25538d8d-2861-40a9-b6df-d289e4b73a7e","eventCategory":"Custom event","eventType":"FooBla","appName":"Booking","tenantID":"shared","ipAddress":"127.0.0.1","timeStamp":"Mon 2019 Aug 05, 19:31:42:060","operatorID":"Admin@Booking","nodeID":"ff9ef7835fd4906aea82694c981938d0","outcome":"Fail","message":"FooBla failed","requestorIdentity":"20190805T192510","lon":"-98.0315889","lat":"30.123275"} |

| AFTER text.txt |

|---|

|

{"id":"25538d8d-2861-40a9-b6df-d289e4b73a7e","eventCategory":"Custom event","eventType":"FooBla","appName":"Booking","tenantID":"shared","ipAddress":"127.0.0.1","timeStamp":"Mon 2019 Aug 05, 19:31:42:060","operatorID":"Admin@Booking","nodeID":"ff9ef7835fd4906aea82694c981938d0","outcome":"Fail","message":"FooBla failed","requestorIdentity":"20190805T192510","lon":-98.0315889,"lat":30.123275} |
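The BEFORE and AFTER text can be reproduced on a single line with a short sketch like the following. The helper name unquote_numbers is hypothetical, and sed -E is used here as an equivalent of the -r option, on the assumption that a reasonably recent GNU sed is installed.

```shell
#!/usr/bin/env bash
# unquote_numbers is a hypothetical helper name; lon, lat, and ts are the
# field names used in this exercise.
unquote_numbers() {
  sed -E \
    -e 's/"lon":"(-?[0-9]*\.[0-9]*)"/"lon":\1/' \
    -e 's/"lat":"(-?[0-9]*\.[0-9]*)"/"lat":\1/' \
    -e 's/"ts":"([0-9]*\.[0-9]*)"/"ts":\1/'
}

# Only the three numeric fields lose their quotes; other strings are untouched.
echo '{"eventType":"FooBla","lon":"-98.0315889","lat":"30.123275"}' | unquote_numbers
# prints {"eventType":"FooBla","lon":-98.0315889,"lat":30.123275}
```

Each pattern requires a decimal point, so a quoted integer such as "30" would stay quoted.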

Replace newlines within an entire file, not one line at a time

If a Unix script reads a file that contains newlines, each line is processed individually. To replace the newlines, you must treat the entire file as a single large string, and then replace the newlines within that string (click here for source).

The output of the newline-removing sed command is piped to sed again, this time to replace: }<space>{ with: }<comma>{

|

sed -e ':a' -e 'N' -e '$!ba' -e 's/\n/ /g' PegaRULES-SecurityEvent.log | sed 's/} {/},{/g' |
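The effect of the two piped sed commands can be seen on a small sample. This is a sketch only: join_json_lines is a hypothetical helper name, and the three one-field objects stand in for real log entries.

```shell
#!/usr/bin/env bash
# join_json_lines is a hypothetical helper name used only for this sketch.
join_json_lines() {
  # First sed: accumulate every line into the pattern space (label a, N, loop
  # until the last line), then turn each embedded newline into a space.
  # Second sed: turn "} {" between adjacent objects into "},{".
  sed -e ':a' -e 'N' -e '$!ba' -e 's/\n/ /g' | sed 's/} {/},{/g'
}

printf '%s\n' '{"id":"a"}' '{"id":"b"}' '{"id":"c"}' | join_json_lines
# prints {"id":"a"},{"id":"b"},{"id":"c"}
```

The result is a comma-separated list of objects, which becomes a valid JSON array once it is placed between square brackets.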

Have one file, test2.jq, act as the start of the query including the JSON array left bracket: let $log := [

Have a second file, restof_test1.jq, contain the end of the JSONiq query starting at the JSON array's right bracket.

- The script initializes a new query file using > test2.jq

- It then appends the output of the back-to-back sed commands using >> test2.jq

- It then appends restof_test1.jq using >> test2.jq

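JSONLogToArray.sh:

    #!/usr/bin/env bash
    set -x

    # Start the query file with the opening of the JSON array.
    echo "let \$log := [" > test2.jq

    # Unquote lon, lat, and ts, join all lines into one, and comma-separate the objects.
    cat PegaRULES-SecurityEvent.log \
      | sed -r 's/"lon":"(-?[0-9]*\.[0-9]*)"/"lon":\1/' \
      | sed -r 's/"lat":"(-?[0-9]*\.[0-9]*)"/"lat":\1/' \
      | sed -r 's/"ts":"([0-9]*\.[0-9]*)"/"ts":\1/' \
      | sed -e ':a' -e 'N' -e '$!ba' -e 's/\n/ /g' \
      | sed 's/} {/},{/g' >> test2.jq

    # Append the rest of the query, which begins with the array's right bracket.
    cat restof_test1.jq >> test2.jq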

Next is the remainder of the JSONiq query (restof_test1.jq). The distance between two coordinates is computed in miles (3958.8 is the radius of the earth in miles), and then divided by the time difference between the two log file entries. The timestamp is computed as hours since 1-1-1970 GMT.

The filter condition ensures pairs of rows are only examined when:

- The eventType is Open Work (see FSG-Booking-Work OpenDefaults override above)

- The operatorID values within the two records are identical.

- The latitudes in both rows are > 20 (this is used to ensure that both rows contain geolocation coordinates. Any comparison can suffice).

- The timestamp in the second row is later than the timestamp in the first row (this avoids redundant calculations in which the velocity would be negative).

restof_test1.jq:

    ]
    let $pi := 3.1415926
    let $join :=
      for $i in $log[], $j in $log[]
      where $i.eventType = "Open Work"
        and $i."id" != $j."id"
        and $i."operatorID" = $j."operatorID"
        and $i.lat > 20
        and $j.lat > 20
        and $j.ts > $i.ts
      let $lat1 := $i.lat
      let $lon1 := $i.lon
      let $lat2 := $j.lat
      let $lon2 := $j.lon
      let $dlat := ($lat2 - $lat1) * $pi div 180
      let $dlon := ($lon2 - $lon1) * $pi div 180
      let $rlat1 := $lat1 * $pi div 180
      let $rlat2 := $lat2 * $pi div 180
      let $a := sin($dlat div 2) * sin($dlat div 2)
              + sin($dlon div 2) * sin($dlon div 2) * cos($rlat1) * cos($rlat2)
      let $c := 2 * atan2(sqrt($a), sqrt(1 - $a))
      let $distance := $c * 3958.8
      let $tdiff := $j.ts - $i.ts
      let $mph := $distance div $tdiff
      return { "id" : $j.id, "eventType" : $j.eventType, "distance" : $distance, "mph" : $mph }
    return [$join]
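The distance and speed arithmetic in the query can be checked outside Rumble for a single pair of coordinates. The following is a minimal sketch that assumes awk is available; haversine_mph is a hypothetical helper, not part of the exercise, and it takes lat1, lon1, lat2, lon2 in decimal degrees followed by the time difference in hours.

```shell
#!/usr/bin/env bash
# haversine_mph is a hypothetical helper: lat1 lon1 lat2 lon2 (decimal
# degrees), then the time difference between the two events in hours.
haversine_mph() {
  awk -v lat1="$1" -v lon1="$2" -v lat2="$3" -v lon2="$4" -v tdiff="$5" 'BEGIN {
    pi = atan2(0, -1)
    radius = 3958.8                       # radius of the earth in miles
    dlat = (lat2 - lat1) * pi / 180
    dlon = (lon2 - lon1) * pi / 180
    a = sin(dlat / 2) ^ 2 + sin(dlon / 2) ^ 2 * cos(lat1 * pi / 180) * cos(lat2 * pi / 180)
    distance = 2 * atan2(sqrt(a), sqrt(1 - a)) * radius
    printf "%.1f miles, %.1f mph\n", distance, distance / tdiff
  }'
}

# A quarter of the equator (0,0 to 0,90) covered in 2 hours.
haversine_mph 0 0 0 90 2
# prints 6218.5 miles, 3109.2 mph
```

The time difference must be greater than zero, which the query guarantees by only pairing rows where the second timestamp is later than the first.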

Send the JSONiq query to Apache Spark as opposed to executing commands using shell mode

Rather than opening the query file, selecting all, copying, and pasting into Rumble's command line, you can send the file's content to Apache Spark and Rumble directly (click here for source).

- Instead of: --shell yes

- Use: --query-path file.jq --output-path results.out

- In a directory where the Rumble .jar exists on a system where Apache Spark is installed, execute the following command:

|

pega8@Lubuntu1:~/rumble$ spark-submit --master local[*] --deploy-mode client spark-rumble-1.0.jar --query-path "test2.jq" --output-path "test2.out" |

| Example test2.out |

|---|

|

[ { "id" : "f5b07887-11ef-4f6f-9f0f-efb060bd3cd7", "eventType" : "Open Work", "distance" : 31.6128421996284034764, "mph" : 2634.4035166357 } |

The example test2.out can be interpreted as:

Two Security Events were recorded in close succession. To have traversed the distance of 31.6 miles (50.9 km) within the time between when those two Security Events were logged requires someone to have traveled at a speed of 2634 miles per hour (4240 km/hr).
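The unit conversion and the "exceeds a reasonable value" decision from the scenario can be sketched as a small post-processing step. The helper name flag_speed and the default limit of 600 mph (roughly airliner cruising speed) are assumptions chosen for illustration; FSG does not specify a threshold.

```shell
#!/usr/bin/env bash
# flag_speed is a hypothetical helper; the 600 mph default limit is an
# assumed value, not a figure from the exercise.
flag_speed() {
  awk -v mph="$1" -v limit="${2:-600}" 'BEGIN {
    kph = mph * 1.609344                  # 1 mile = 1.609344 km
    verdict = (mph > limit) ? "SUSPICIOUS" : "ok"
    printf "%.0f mph = %.0f km/h: %s\n", mph, kph, verdict
  }'
}

flag_speed 2634.4035166357
# prints 2634 mph = 4240 km/h: SUSPICIOUS
```

A different limit can be passed as the second argument, for example flag_speed 2634.4 3000 for a more permissive check.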