Testing your application

4 Tasks

40 mins

Scenario

For an Assistance Request case, when users enter the vehicle make, one or more associated models are displayed in the Model drop-down list. Intermittently, users report that during the Submit request process, some car makes do not have an associated model name in the drop-down list when entering the vehicle information. Stakeholders need to easily identify the vehicle makes with at least one associated model.

Additionally, stakeholders are concerned about application performance. To ensure that customers can complete Assistance Request cases efficiently, stakeholders require that the vehicle model list is generated within 200 milliseconds and that the user interface and end-to-end process flow function correctly.

To meet these requirements, your deployments manager has asked you to:

- Create a unit test for the vehicle model list that confirms the list is generated within the 200-millisecond threshold.

- Create a scenario test to automate testing for the Assistance Request case type.

- Run a Test Coverage session using the unit test and scenario test and report the results.

The following table provides the credentials you need to complete the challenge.

| Role | User name | Password |
|---|---|---|
| Application Developer | tester@gogoroad | pega123! |

Note: Your practice environment may support the completion of multiple challenges. As a result, the configuration shown in the challenge walkthrough may not match your environment exactly.

Challenge Walkthrough

Detailed Tasks

1 Run the List Vehicle models data page and test different Make values

- In the Pega instance for the challenge, enter the following credentials:

  - In the User name field, enter tester@gogoroad.

  - In the Password field, enter pega123!.

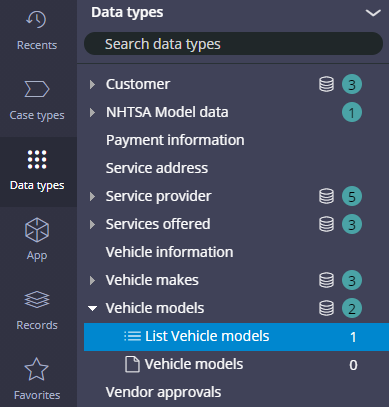

- In the navigation pane of Dev Studio, click Data types to open the Data Explorer.

- Expand Vehicle models, and then click List Vehicle models to edit the D_VehiclemodelsList data page.

Note: If the List Vehicle models data page is not displayed in the Data Explorer, click Options > Refresh to refresh the explorer.

- In the upper right, click Actions > Run. The Run Data Page window is displayed.

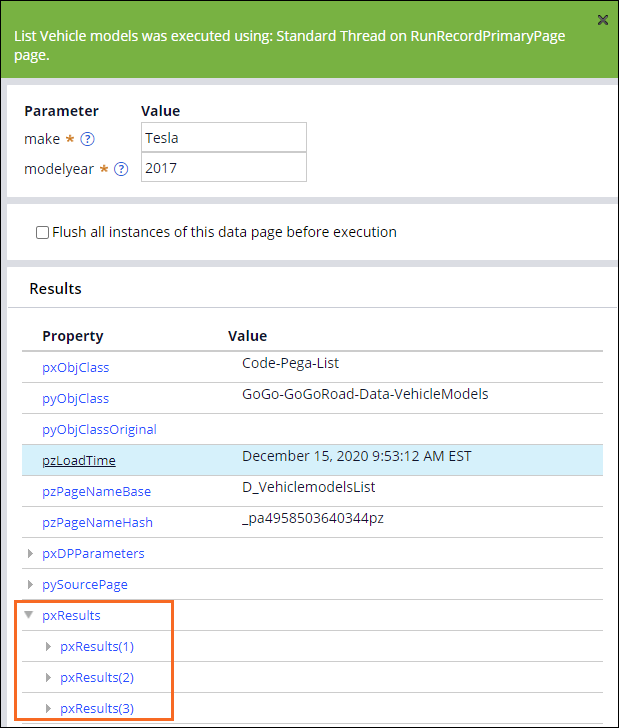

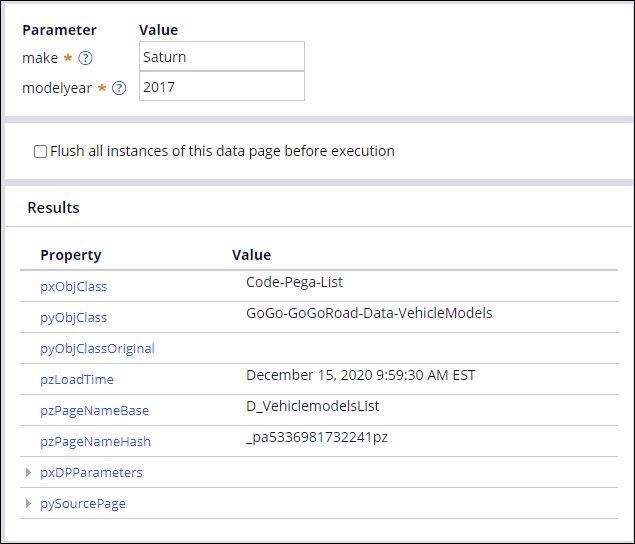

- For the make parameter, in the Value field, enter Tesla as the test value.

- For the modelyear parameter, in the Value field, enter 2017 as the test value.

- In the upper right, click Run to test the data page.

- Expand the pxResults property and confirm that the property includes three results pages.

- For the make parameter, in the Value field, enter Saturn, and then click Run. The pxResults property is no longer displayed, which confirms that not all vehicle makes have at least one associated model.
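The behavior observed in this task can be sketched in Python as a parameterized lookup that returns no results for some makes. This is only an illustration, not how the data page is implemented: the `VEHICLE_MODELS` table, the function names, and the model names are hypothetical placeholders. Only the three Tesla results for 2017 and the empty Saturn result come from the steps above.

```python
# Hypothetical stand-in for the data source behind D_VehiclemodelsList.
# Model names are placeholders, not the values in the challenge environment.
VEHICLE_MODELS = {
    ("Tesla", 2017): ["Model A", "Model B", "Model C"],
    ("Ford", 2017): ["Model D"],
}


def list_vehicle_models(make: str, modelyear: int) -> list[str]:
    """Return the models for a make and model year, or an empty list if none exist."""
    return VEHICLE_MODELS.get((make, modelyear), [])


def makes_without_models(makes: list[str], modelyear: int) -> list[str]:
    """Return the makes that have no associated model for the given year."""
    return [make for make in makes if not list_vehicle_models(make, modelyear)]


if __name__ == "__main__":
    print(len(list_vehicle_models("Tesla", 2017)))
    print(makes_without_models(["Tesla", "Ford", "Saturn"], 2017))
```

A helper such as `makes_without_models` reflects the stakeholder need in the scenario: separating the makes that have at least one associated model from those that have none.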

2 Create a unit test

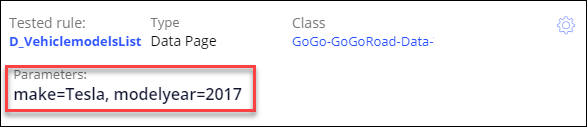

- In the Run Data Page window, for the make parameter, in the Value field, enter Tesla.

- For the modelyear parameter, in the Value field, enter 2017.

- In the upper right, click Run. The Convert to test button is displayed.

- Click Convert to test to close the Run Data Page window and create a test case record for the data page that uses your test results.

Note: The parameter values entered in the Run Data Page window are automatically populated in Edit Test Case.

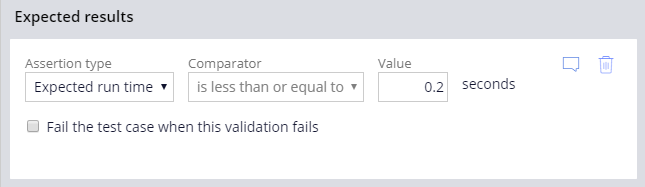

- In Edit Test Case, in the Expected results section, verify that the Assertion type list value is Expected run time.

- In the Value field, enter 0.2 to set the passing threshold for the unit test to 0.2 seconds (200 milliseconds).

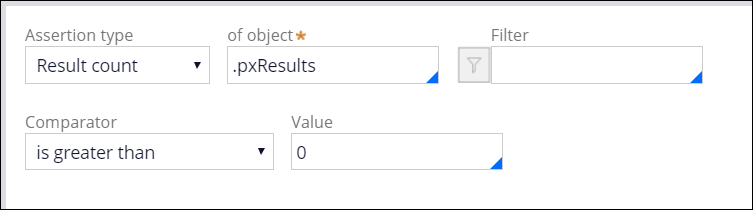

- In the second Assertion type list, select Result count.

- In the of object field, enter or select .pxResults.

- In the Comparator list, select is greater than.

- In the Value field, enter 0. The unit test validates that at least one record is returned from the data page when the make parameter value is Tesla.

- Click Save.

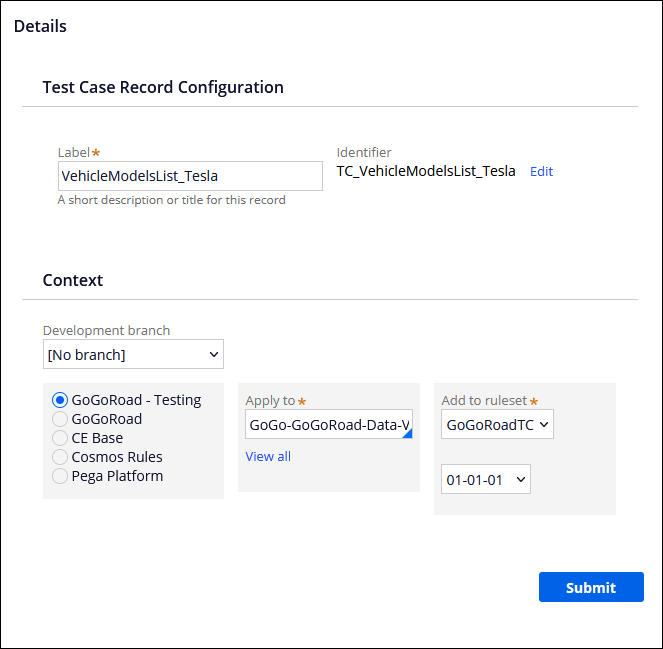

- In the Label field, enter VehicleModelsList_Tesla to name the test case record.

- In the Development branch field, verify that [No branch] is selected.

- In the Add to ruleset and version lists, verify that the highest ruleset version is selected.

- Click Submit to create the unit test.
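The two assertions configured in this test case can be pictured with a minimal Python sketch. It assumes that the Expected run time assertion passes when the elapsed time does not exceed the threshold, and that the Result count assertion passes when the number of results is greater than the configured value. The `run_unit_test` function and its parameter names are hypothetical and are not part of the Pega Platform.

```python
import time
from typing import Callable, Sequence


def run_unit_test(
    load_results: Callable[[], Sequence],
    max_seconds: float = 0.2,
    count_greater_than: int = 0,
) -> dict:
    """Time one call to load_results and evaluate both assertions."""
    start = time.perf_counter()
    results = load_results()
    elapsed = time.perf_counter() - start

    # Assumed semantics: run time must not exceed the threshold.
    run_time_ok = elapsed <= max_seconds
    # Result count "is greater than" the configured value (0 here).
    count_ok = len(results) > count_greater_than

    return {
        "elapsed": elapsed,
        "run_time_ok": run_time_ok,
        "count_ok": count_ok,
        "passed": run_time_ok and count_ok,
    }


if __name__ == "__main__":
    # Placeholder loader standing in for a data page that returns three results.
    print(run_unit_test(lambda: ["a", "b", "c"])["passed"])
```

In this sketch, a loader that returns an empty list fails the count assertion even when it finishes quickly, which corresponds to a make with no associated models.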

3 Create unit tests for different vehicle makes

- In the VehicleModelsList_Tesla test case, to the right of Save, click the down arrow.

- Click Save as.

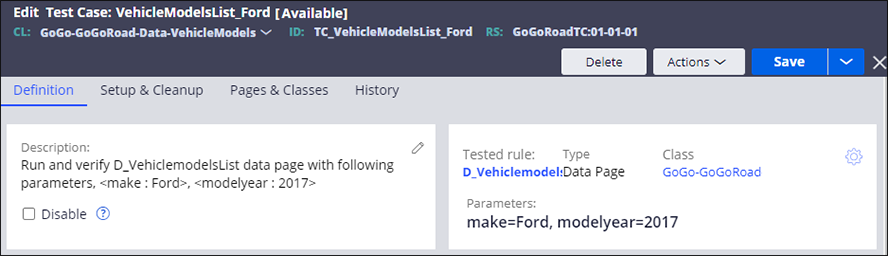

- In the Label field, enter VehicleModelsList_Ford.

- Click Create and open to save a copy of the test case.

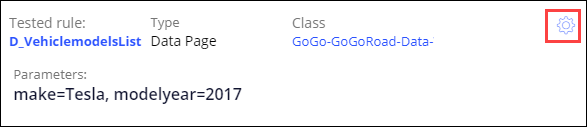

- To the right of Class, click the Gear icon to open the Edit details window and update the rule parameters.

- In the Parameter(s) sent section, in the make field, enter Ford.

- Click Submit to close the Edit details window.

- In the Description section, click the Edit icon to edit the vehicle make.

- In the parameters area, enter Ford to replace the Tesla value for <make: Tesla>.

- Click Save to complete the configuration of the unit test.

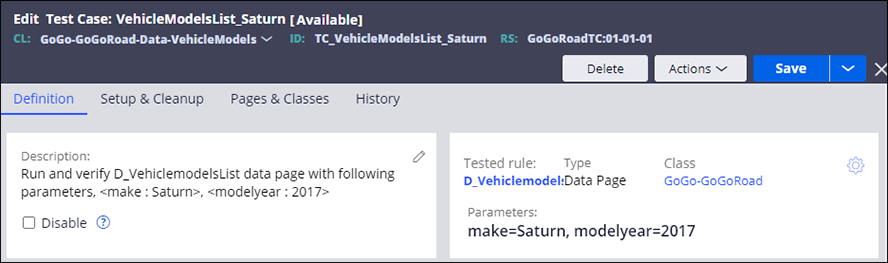

- Repeat steps 1-10, using VehicleModelsList_Saturn as the test case label and Saturn as the make parameter.

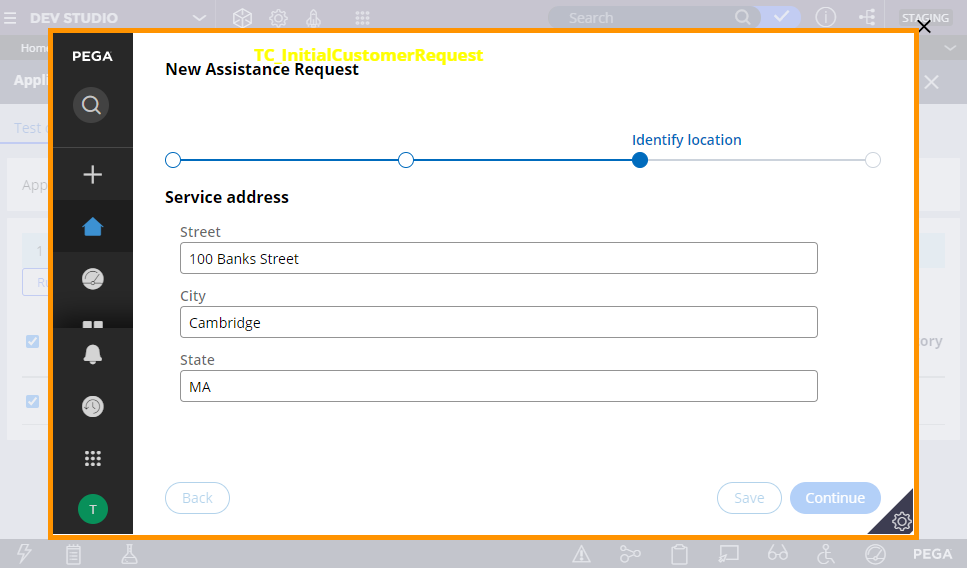
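The Save as approach in this task amounts to copying one test case and changing only the make parameter and the label. A minimal Python sketch of that idea follows; the `UnitTestCase` class and the `save_as` helper are hypothetical names used only for illustration.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class UnitTestCase:
    """Hypothetical record holding the label and parameters of one test case."""
    label: str
    make: str
    modelyear: int


def save_as(original: UnitTestCase, make: str) -> UnitTestCase:
    """Copy a test case, changing only the make and the label derived from it."""
    return replace(original, label=f"VehicleModelsList_{make}", make=make)


tesla = UnitTestCase("VehicleModelsList_Tesla", "Tesla", 2017)
cases = [tesla, save_as(tesla, "Ford"), save_as(tesla, "Saturn")]

if __name__ == "__main__":
    for case in cases:
        print(case.label, case.make, case.modelyear)
```

Because each copy keeps the other parameter (here, `modelyear`), the three test cases differ only in the make under test.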

4 Record a new scenario test case

- In the header of Dev Studio, click Launch Portal > User Portal to open a new browser tab or window with the user view of the GoGoRoad application.

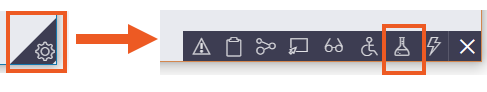

- In the lower right of the screen, click the Runtime toolbar icon, and then click the Automation Recorder icon to open the Scenario tests pane.

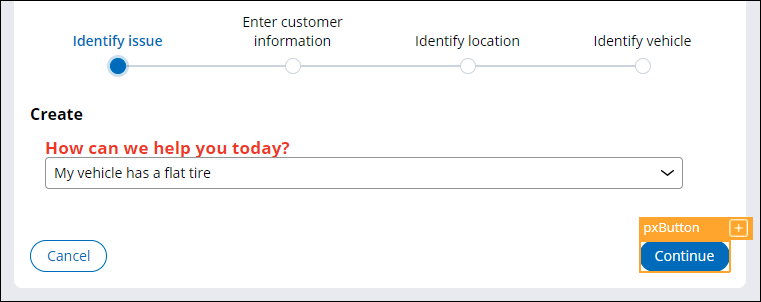

-

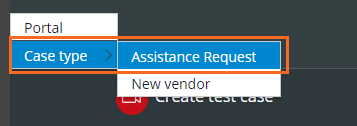

In the Scenario tests pane, click to begin recording your actions on a case type.

-

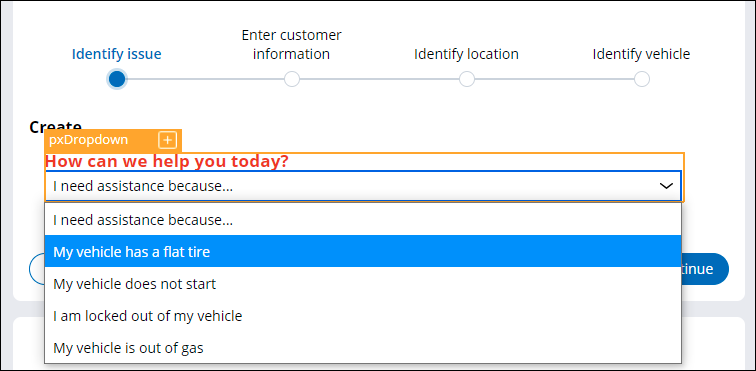

In the Create view, in the How can we help you today? list, select My vehicle has a flat tire.

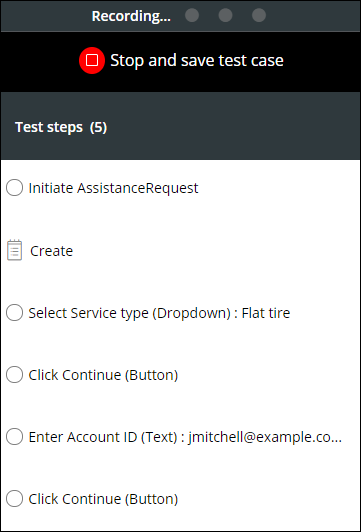

Note: An orange border is displayed around all objects you interact with to indicate that the Automation Recorder tool is recording the actions you take in this scenario. In the contextual pane, the actions you perform are recorded in the order in which you perform them.

- Click Continue to advance to the Enter customer information form.

- In the Enter customer information form, in the Account ID list, select [email protected].

- Click .

- Complete all fields on the Identify location and Identify vehicle forms.

- In the Scenario tests pane, click to stop recording your actions.

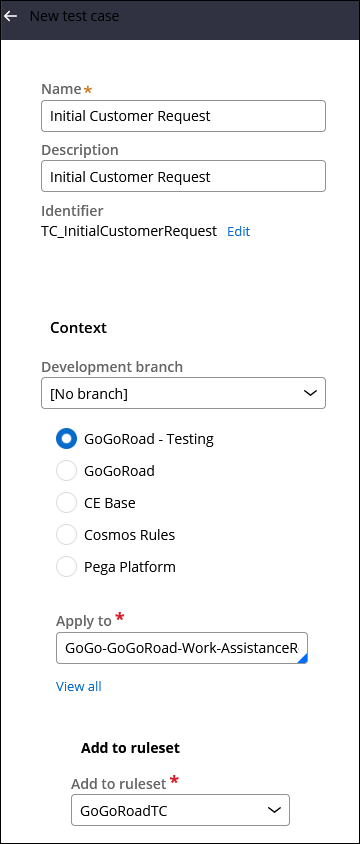

- In the New test case pane, enter or verify the following information:

| Field or drop-down | Value |
|---|---|
| Name | Initial Customer Request |
| Description | Initial Customer Request |
| Development branch | [No branch] |
| Apply to | GoGo-GoGoRoad-Work-AssistanceRequest |
| Add to ruleset | GoGoRoadTC |

- Click to save the new scenario test case.

- In the lower-left corner, click the User icon > Log off to log out of the User Portal and return to Dev Studio.
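As a rough mental model of the recording in this task, a scenario test can be thought of as an ordered list of UI actions that is captured only between the start and stop of a recording. The Python sketch below illustrates that idea. The `Step` and `ScenarioRecorder` names are hypothetical and do not describe how the Automation Recorder works internally.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Step:
    """One recorded UI action, for example a click or a list selection."""
    action: str
    target: str
    value: Optional[str] = None


class ScenarioRecorder:
    """Collects steps in order, but only while recording is active."""

    def __init__(self) -> None:
        self._steps: list[Step] = []
        self._recording = False

    def start(self) -> None:
        self._steps.clear()
        self._recording = True

    def record(self, action: str, target: str, value: Optional[str] = None) -> None:
        if self._recording:
            self._steps.append(Step(action, target, value))

    def stop(self) -> list[Step]:
        self._recording = False
        return list(self._steps)


recorder = ScenarioRecorder()
recorder.start()
recorder.record("select", "How can we help you today?", "My vehicle has a flat tire")
recorder.record("click", "Continue")
scenario = recorder.stop()
recorder.record("click", "Log off")  # ignored: recording has already stopped
```

Keeping the steps in the order they were performed is what allows the same sequence to be replayed later as an automated test.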

Confirm your work

Specify the application for Test coverage data capture

- On the Configure menu, click Application > Quality > Settings.

- Click Include built-on applications, and then select GoGoRoad to include the application for Test coverage.

- Click .

Start a new Test Coverage session

- Click Configure > Application > Quality > Test Coverage to open the Test coverage screen.

- Click Start new session.

- In the Title field, enter Session1 for the name of the session.

- Click to begin the Test coverage session.

Run the Unit test

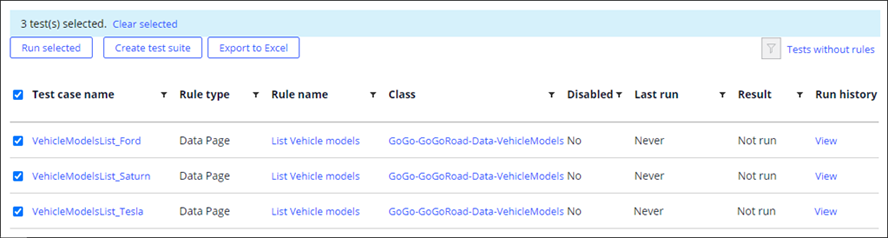

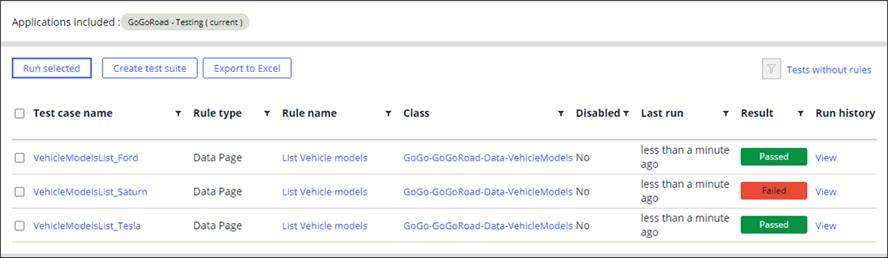

- On the Configure menu, click Application > Quality > Automated testing > Unit testing > Test cases to display all the unit tests that you created for the GoGoRoad application.

- To the left of the Test case name column, select the check box to select all the test cases.

- Click to execute the unit tests.

- In the Result column, verify that the Tesla and Ford unit test cases passed and the Saturn unit test case failed.

- To the right of Failed, click View to see additional information and investigate why the Saturn test case failed. The Test Runs Log dialog box is displayed.

- Click the test result to show the test results for VehicleModelsList_Saturn.

Run the scenario test

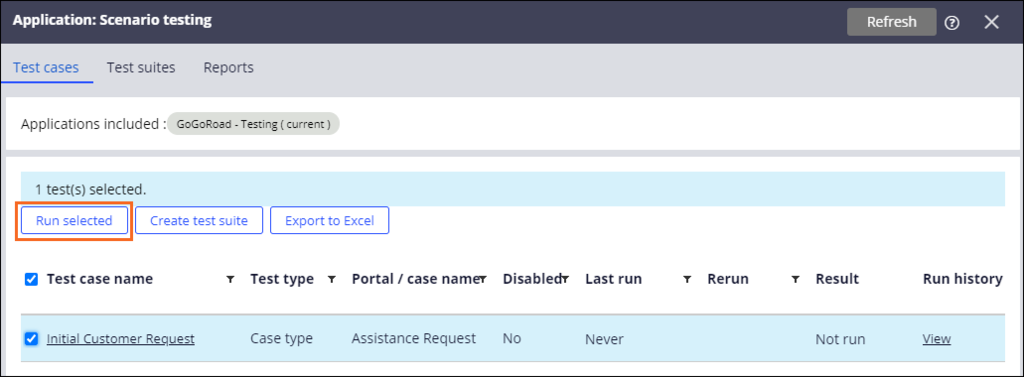

- In the header of Dev Studio, click to view the list of test cases for the application.

- In the Scenario testing view, select the Initial Customer Request check box.

- Click to execute the test.

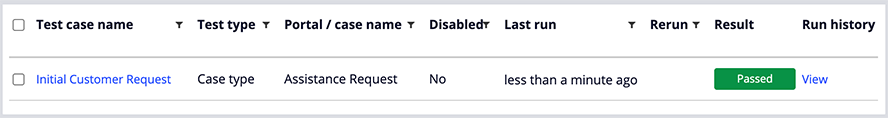

- In the Result column, verify that the result is Passed to signify the test case is successful.

Stop the Test Coverage session and view results

- Return to the Application: Test coverage tab.

- Click Stop coverage.

- Click Yes to stop the coverage session and generate the report.

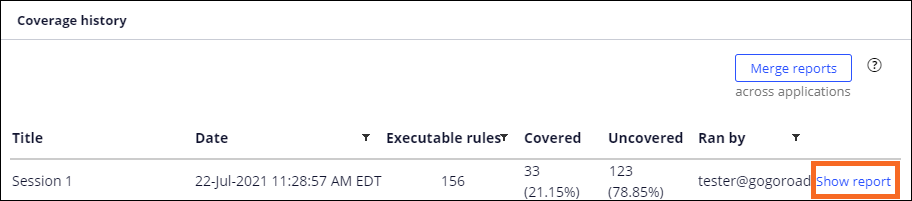

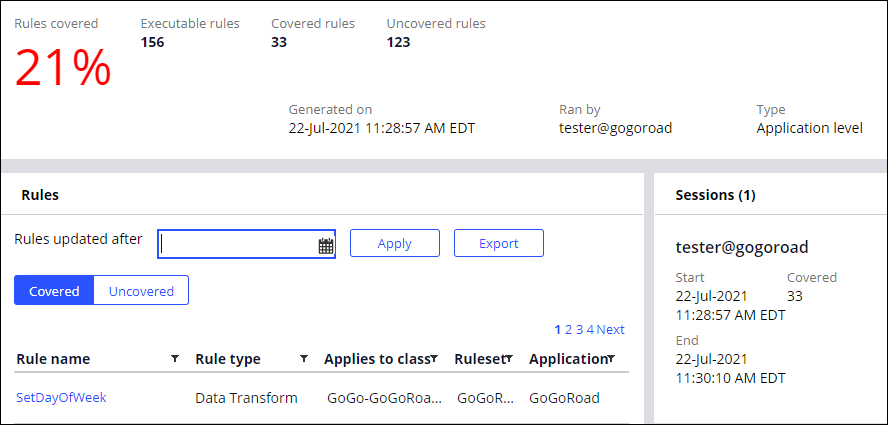

- In the Coverage history section, click Show report for the Test coverage session you just ended.

- View the results of the Test coverage report.

Note: Because this challenge environment supports the completion of multiple challenges, the results of your Test coverage report may differ from the results shown above. The reported coverage should be greater than 0%, and the report should show values for Executable rules, Covered rules, and Uncovered rules.
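The three values in the report relate to each other in a simple way, which the following Python sketch illustrates. It assumes that the coverage percentage is the share of executable rules that were run during the session. The `coverage_summary` function and the rule names other than D_VehiclemodelsList are hypothetical.

```python
def coverage_summary(executable_rules: set[str], executed_rules: set[str]) -> dict:
    """Summarize coverage as counts and a percentage of executable rules."""
    covered = executable_rules & executed_rules
    uncovered = executable_rules - executed_rules
    # Assumed definition: covered rules divided by executable rules.
    percent = 100 * len(covered) / len(executable_rules) if executable_rules else 0.0
    return {
        "executable": len(executable_rules),
        "covered": len(covered),
        "uncovered": len(uncovered),
        "percent": percent,
    }


if __name__ == "__main__":
    # Placeholder rule names; only D_VehiclemodelsList appears in this challenge.
    executable = {"D_VehiclemodelsList", "RuleA", "RuleB", "RuleC"}
    executed = {"D_VehiclemodelsList", "RuleA", "RuleOutsideApplication"}
    print(coverage_summary(executable, executed))
```

Under this assumption, running any unit test or scenario test that touches at least one executable rule during the session yields a percentage greater than 0%.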
